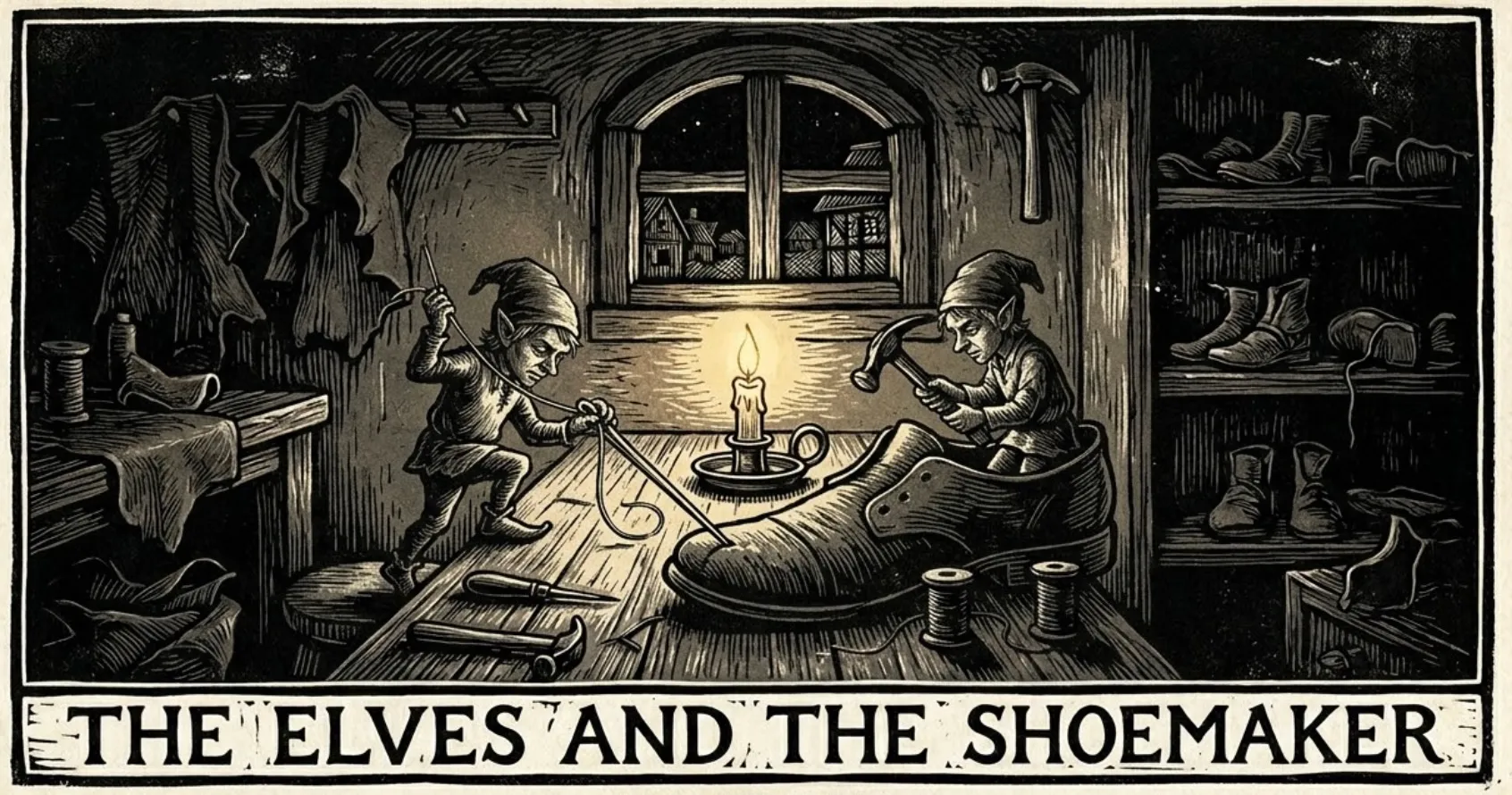

I’ve been running a thought experiment about my agentic software projects. If agents were to do work at night, unprompted, what would be the best gift they could give you to wake up to in the morning? There’s a fairy story about a shoemaker who leaves some scraps lying around after a busy day of work, and some elves come along and make some shoes from them. This is the kind of thing I’m thinking about.

This is distinct from the projects where you spend time creating plans during the day for agents to work on at night. And it’s different from autoresearch coming up with something after working tirelessly in a loop. The part of this that intrigues me is whether agents could do work unbidden and produce delightful gifts that were unexpected.

Some ideas that lead to this conclusion (where “people” may just refer to me):

- Asking agents to do stuff is potentially going to be an anti-pattern

- People are messy when they are exploring ideas

- People don’t like having to spend time cleaning up

- People often have new ideas as they see stuff being built

- People want to capture ideas while they are fresh which means not pausing to think about quality

- People need to do steps in serial to see how they affect work downstream

- People are bad at remembering ideas they had and plans they made

There seem to be a few ways of thinking about working with agents:

- Creating skills and harnesses to try to stick to a good process

- Creating detailed plans with little room for errors

- Reviewing code that agents create to find problems

Some of these things become serious bottlenecks when moving at agent speed. I’ve realised that I’m the bottleneck that stops more of my ideas from being built.

What would this look like if it were true?

This could materialise in a number of different ways, depending on your level of trust. At the least trusting end of the spectrum, you would wake up to a bunch of proposals from your night elves. These would then need to be acted on. At the more trusting end of the spectrum, the elves have done significant work: they have created features that were implicit in, or extrapolated from, the recent work you’ve been doing.

Between these points are numerous other ideas:

- Improving the quality of code

- Improving test coverage / effectiveness

- Organising documentation

- Doing expensive QA reviews and highlighting UX friction

- Examining workflow friction and improving human / agent tooling

- Organising / prioritising the backlog

- Implementing fixes for bug reports

These can be done while a human is in the driving seat, but it feels like a waste of their time.

What I want to spend time doing is using the software that’s been built and thinking of ways to improve it. Sometimes that means experimenting with different ideas. Sometimes it’s just thinking about abstractions that make the model work better.

Another analogy for this kind of work is what our brains do when we’re asleep and dreaming. They organise memories and make our context more useful when we awaken in the morning. We don’t do this consciously. It would be a massive pain if we did.

How would the elves know what to do?

Fixing tech debt

Some of the work that elves could do is routine, especially improving the quality of code. One of the major gripes about agentic working is that agents make a mess with slop. They are given plans to execute and they follow them along the path of least resistance. They don’t come up with elegant new abstractions as part of that because they often weren’t asked to.

This is broadly the same as humans working on projects. The difference is that humans have ego and identity wrapped up in the quality of the work they produce. This causes pain, which is a motivator to improve the situation. Often teams will petition for time to clean up technical debt. Product leaders who ignore these requests often find velocity falls.

My thesis is that agents can improve the quality of code if given the right directions. This is not to say that they need to be given the exact code to change. Rather that they should be given the right kind of instructions about what good looks like.

At the simplest, this means running analysis scripts on the codebase and fixing the issues they report. Working at a slightly higher level means asking the agent the kinds of questions that architects might ask themselves and their teams: “What kind of an application is this? What workload is it handling? What are the right ways of structuring this to be maintainable and scalable?” This is an oversimplification, but it’s not wildly off the mark.
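As a rough illustration of the simplest case, here is a minimal Python sketch that turns a linter report into one cleanup brief per file. Everything in it is an assumption for illustration: the `path:line:col: CODE message` line format (common among linters, but check your own tool), the function names `group_findings` and `to_brief`, and the sample file names.

```python
import re
from collections import defaultdict

# Assumed report format: "path:line:col: CODE message". Adjust for your tool.
FINDING = re.compile(
    r"^(?P<path>[^:]+):(?P<line>\d+):(?P<col>\d+): (?P<code>\S+) (?P<msg>.+)$"
)


def group_findings(report: str) -> dict[str, list[str]]:
    """Group findings by file so each file can become one cleanup task."""
    tasks = defaultdict(list)
    for raw in report.splitlines():
        match = FINDING.match(raw.strip())
        if match:  # skip summary lines and anything that does not parse
            tasks[match["path"]].append(
                f"line {match['line']}: {match['code']} {match['msg']}"
            )
    return dict(tasks)


def to_brief(path: str, findings: list[str]) -> str:
    """Render a task brief that a (hypothetical) night agent could pick up."""
    bullet_list = "\n".join(f"- {finding}" for finding in findings)
    return f"Clean up {path} without changing behaviour:\n{bullet_list}"


# Hypothetical report text, made up for this example.
sample = """\
city/roads.py:12:1: F401 'math' imported but unused
city/roads.py:88:5: C901 'build_grid' is too complex (14)
city/zoning.py:3:1: E501 line too long (131 > 100 characters)
Found 3 errors.
"""

for path, findings in group_findings(sample).items():
    print(to_brief(path, findings))
```

Grouping by file is only one possible way to slice the work. It keeps each brief small enough that an agent can fix it and re-run the analysis without touching unrelated code.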

Proactive product development

The nicer thing that could be available in the morning would be actual product improvements. Certainly for me, in the kind of projects that I’ve been building, I make a plan and have to step through it mostly sequentially with an agent.

But this is not universally true. There are many ideas that I’ve discussed with an agent that probably contain enough information to do them reasonably well. The issue is that I’ve forgotten that we discussed it. An example is that in my city generation project, I asked whether some of our calculations could be processed by a GPU more quickly than we were doing on the CPU. The answer was yes, but we didn’t do it at that point because I was trying to make the thing work in the current form.

The elves could also look at recent conversation history to find implicit ideas and either make them explicit by creating plans for them, fitting them into the backlog, re-writing the aspirational spec to include them, or even building them. The general thesis is that it’s probably about the same amount of effort for an agent to build a prototype with its own reasoned assumptions about what I was thinking, as it is to do a whole lot of back and forth over a spec. It can say “Btw, I built a version of that GPU stuff you mentioned. Looking at the results, it does seem to speed things up a lot”.
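To make the “look at recent conversation history” step a little more concrete, here is a deliberately naive Python sketch. It flags human messages containing phrases that often mark a parked idea. The cue list, the `Message` type and `find_parked_ideas` are all hypothetical, and in practice an agent reading the transcript would do a much better job than substring matching. The point is only the shape of the step: history in, candidate ideas out.

```python
from dataclasses import dataclass

# Phrases that often mark an idea that was raised and then parked.
# This list is a guess, and plain substring matching will produce false positives.
DEFERRAL_CUES = ("could we", "at some point", "later", "not now", "come back to")


@dataclass
class Message:
    speaker: str  # "human" or "agent"
    text: str


def find_parked_ideas(history: list[Message]) -> list[str]:
    """Return human messages that look like ideas raised but not acted on."""
    candidates = []
    for message in history:
        lowered = message.text.lower()
        if message.speaker == "human" and any(cue in lowered for cue in DEFERRAL_CUES):
            candidates.append(message.text)
    return candidates


# A made-up transcript, loosely based on the GPU example above.
history = [
    Message("human", "Could we run these calculations on the GPU instead of the CPU?"),
    Message("agent", "Yes, that should be faster."),
    Message("human", "Let's come back to that once the current version works."),
    Message("human", "Fix the failing test in the road generator."),
]

for idea in find_parked_ideas(history):
    print("Candidate for follow-up:", idea)
```

Each candidate could then be turned into a plan, a backlog item, or a prototype, depending on how much you trust the elves.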

What stops us from working like this?

There are several points that conspire to stop us living the dream.

- We haven’t worked out how to frame the problem in the right way. We’re too focussed on asking agents to do plans and tasks. So we just improve our tooling to get better at that.

- We don’t trust agents to actually make improvements without our explicit guidance. We feel that since they make so much mess when given clear directions, the mess would be much worse if we gave them less direction.

- Conflicts and dependencies are painful to manage. If a bunch of agents worked autonomously, how would we merge all their output?

I’m considering a few ideas around this:

- We need to maintain a good source of truth of what our application is meant to do. Agents can help with this.

- We need to have good tests. Agents can help with this if we have a good source of truth.

- We need to have good contracts in our architecture so that different parts can be changed without too many consequences.

- We should be able to have agents merge their branches to a single place (whether main, or some other branch), without review.

This last point is quite important. I’m advocating the “ask forgiveness”, rather than “seek permission” model of development that is favoured by tech companies (and sometimes mocked and hated by those outside).

A key point is that if this autonomous work is happening without a human involved, the agents can take their time. They can have things grilled by adversarial agents. They can take screenshots and analyse them. They can run expensive fuzz tests.

Agentic slop is exacerbated by working under time pressure with an impatient human who wants to move on to the next idea.
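One way to picture merging without human review is a gate that only lets overnight work through once the slow checks have passed, with the trust level deciding whether the result is merged or merely proposed. The Python sketch below is an assumption throughout: the `Trust` levels, the `NightlyResult` fields and the `decide` function are invented names, not part of any existing tool.

```python
from dataclasses import dataclass
from enum import Enum


class Trust(Enum):
    PROPOSE_ONLY = 1  # wake up to proposals that a human acts on
    AUTO_MERGE = 2    # wake up to work that is already merged


@dataclass
class NightlyResult:
    branch: str
    tests_passed: bool
    adversarial_review_passed: bool
    fuzz_passed: bool


def decide(result: NightlyResult, trust: Trust) -> str:
    """Return 'merge', 'propose' or 'hold' for one branch of overnight work."""
    checks_ok = (
        result.tests_passed
        and result.adversarial_review_passed
        and result.fuzz_passed
    )
    if not checks_ok:
        return "hold"  # keep the branch for another pass, do not surface it yet
    return "merge" if trust is Trust.AUTO_MERGE else "propose"


# Hypothetical branch name, used only for illustration.
result = NightlyResult("elves/gpu-prototype", True, True, True)
print(decide(result, Trust.PROPOSE_ONLY))
print(decide(result, Trust.AUTO_MERGE))
```

Because nobody is waiting, the checks feeding this gate can be as slow and expensive as you like.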

Appendix: the elves and the shoemaker

The elves and the shoemaker story is from the Brothers Grimm. A shoemaker is poor and only has enough leather for a single pair of shoes. He leaves it out, and in the morning finds that elves have crafted beautiful shoes. He sells these for a good price, gets more leather and the same thing happens. At one point, he and his wife are curious about who is doing the work and stay up to watch. They find out the elves aren’t wearing any clothes and, in an act of reciprocation, make some for them. The elves take the clothes and, delighted, prance out of the door, never to be seen again. I’m not sure what this tale predicts about our eventual relationship with AI.