I made a new project yesterday, based on the ideas in my post about shoemaking elves. I started by getting Claude to read that post, as well as look at my previous projects octopoid, octoclean and octowiki. I’d seen that Claude had a new mechanism for running code on a schedule, and it clicked with an idea I’d had bouncing around: that proactive agents could be instructed like NPCs in a video game, using a behaviour tree.

I basically provided all these ideas to Claude and asked it to write a spec in the wiki of the project. Then we worked out an entry point for getting shoemaking elves to self-bootstrap the project from the specification and build it. The idea evolved over a few iterations. Some of it worked, some of it didn’t. I’ll leave that for a separate post.
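The behaviour tree idea is easier to see in code. What follows is a minimal sketch in Python, not the shoe-makers implementation. It uses the two standard composite nodes, a sequence (run children until one fails) and a selector (try children until one succeeds). The step names are hypothetical, borrowed loosely from the explore, prioritise, execute and review layers that appear in the footnotes. Unlike a state machine, nothing records which state the elf is in: the tree is re-evaluated from the root on every tick, and the first branch whose conditions hold decides what the elf does next.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Status(Enum):
    SUCCESS = "success"
    FAILURE = "failure"


@dataclass
class Action:
    """Leaf node: wraps a callable that inspects or updates shared state."""
    name: str
    run: Callable[[dict], Status]

    def tick(self, state: dict) -> Status:
        return self.run(state)


@dataclass
class Sequence:
    """Runs children in order and stops at the first failure."""
    children: list

    def tick(self, state: dict) -> Status:
        for child in self.children:
            if child.tick(state) is Status.FAILURE:
                return Status.FAILURE
        return Status.SUCCESS


@dataclass
class Selector:
    """Tries children in order and stops at the first success."""
    children: list

    def tick(self, state: dict) -> Status:
        for child in self.children:
            if child.tick(state) is Status.SUCCESS:
                return Status.SUCCESS
        return Status.FAILURE


def condition(key: str) -> Action:
    # Succeeds only if the flag is set in the shared state.
    return Action(f"if {key}", lambda s: Status.SUCCESS if s.get(key) else Status.FAILURE)


def step(name: str) -> Action:
    # Hypothetical work step: records that it ran, then succeeds.
    def run(s: dict) -> Status:
        s.setdefault("log", []).append(name)
        return Status.SUCCESS
    return Action(name, run)


# Priority order: fix broken tests first, then work on known candidates,
# and only explore when there is nothing better to do.
elf_tree = Selector([
    Sequence([condition("tests_failing"), step("fix tests")]),
    Sequence([condition("has_candidates"), step("prioritise"), step("execute"), step("review")]),
    step("explore"),
])
```

Ticking the tree with `{"has_candidates": True}` runs prioritise, execute and review; ticking it with an empty state falls through to explore. Adding a new behaviour means adding a branch at the right priority rather than rewiring transitions.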

Pragmatic agents and incremental thought

As part of that process, I was trying to see whether agents could balance forward product momentum with conscientious cleaning. I wanted some of the effort to go into reducing code smells and keeping slop at bay. The agents did a bit of this, but it felt very incremental.

This prompted an interesting chain of thought: what is an elegant solution? As humans, we love an elegant solution to a problem. I’m not sure how I’d describe one, though, let alone ask an agent to increase the “elegance” of a project. I gave the behaviour tree as an example of something pretty neat about the shoemaker project. It’s a completely different framing of agentic work from the annoying state machine I battled with in octopoid.

The bit that really made me think though was that Claude said “you thought of that idea because of your experiences with software development” (or words to that effect).

In reality, Claude has vastly more experience than I do, by way of its training data. Why, then, doesn’t it come up with creative solutions to problems? It can quickly put the pieces together when asked to, but most of the time it isn’t asked. And when it is, the request is often paired with a competing directive that it deems more important to follow.

When thinking about the system, I had reasoned from analogy: remembering the story of the elves and the shoemaker, and thinking about how that would be a nice way of having work done. I’d also had experience with behaviour trees in the past, though I’m not sure what made them pop back into my attention.

The point that seems interesting to me is that, as humans, we are bombarded by random concepts as we make our way through the world. On top of this, our projects and problems keep bubbling around in our heads even while we are doing something else. Often unconsciously, we examine our ideas through weird combinatorial lenses, e.g. taking fairy tales and comparing them with the work we’re doing.

The leading question I had coming out of this was whether Claude could be prompted to work creatively in this way. I’ve previously tried things like asking it to think about the Gang of Four design patterns and whether they apply to a codebase, but this is something different.

Escaping local maxima

As background, I’ve already been running experiments in my city generator project — getting agents to run different versions of a pipeline so we can compare results in a kind of Darwinian way.

The conclusion I came to is that maybe randomising a few variables is not enough. Maybe Claude should be asked to pull in a randomised higher level concept and look at the work it’s doing through that new lens.
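A minimal sketch of that idea, assuming the agent is driven by a plain-text prompt. The concept pool is hypothetical and fixed so the sketch runs offline; in the experiment the concept came from a random Wikipedia page, and a live version could pull one from Wikipedia's Special:Random page instead. The function name and prompt wording are illustrative, not part of any Claude API.

```python
import random

# Hypothetical concept pool. A fixed list keeps the sketch offline and
# reproducible; the experiment itself used random Wikipedia pages.
CONCEPTS = [
    "archaeological sections",
    "consensus All-American teams",
    "crop rotation",
    "double-entry bookkeeping",
]


def lens_prompt(project: str, rng: random.Random) -> tuple[str, str]:
    """Pick a random concept and build a prompt asking an agent to
    re-examine the project through it. Returns (concept, prompt)."""
    concept = rng.choice(CONCEPTS)
    prompt = (
        f"Read about {concept}. Then look again at the {project} project "
        f"through that lens: explain how the concept maps onto the project, "
        f"and say plainly whether any of it is actionable."
    )
    return concept, prompt


if __name__ == "__main__":
    # A seeded generator makes each run reproducible.
    concept, prompt = lens_prompt("shoe-makers", random.Random(42))
    print(concept)
    print(prompt)
```

Passing the random generator in, rather than calling `random.choice` on the global one, means a given lens can be replayed exactly, which matters if lenses are going to be compared side by side in the Darwinian style of the city generator experiments.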

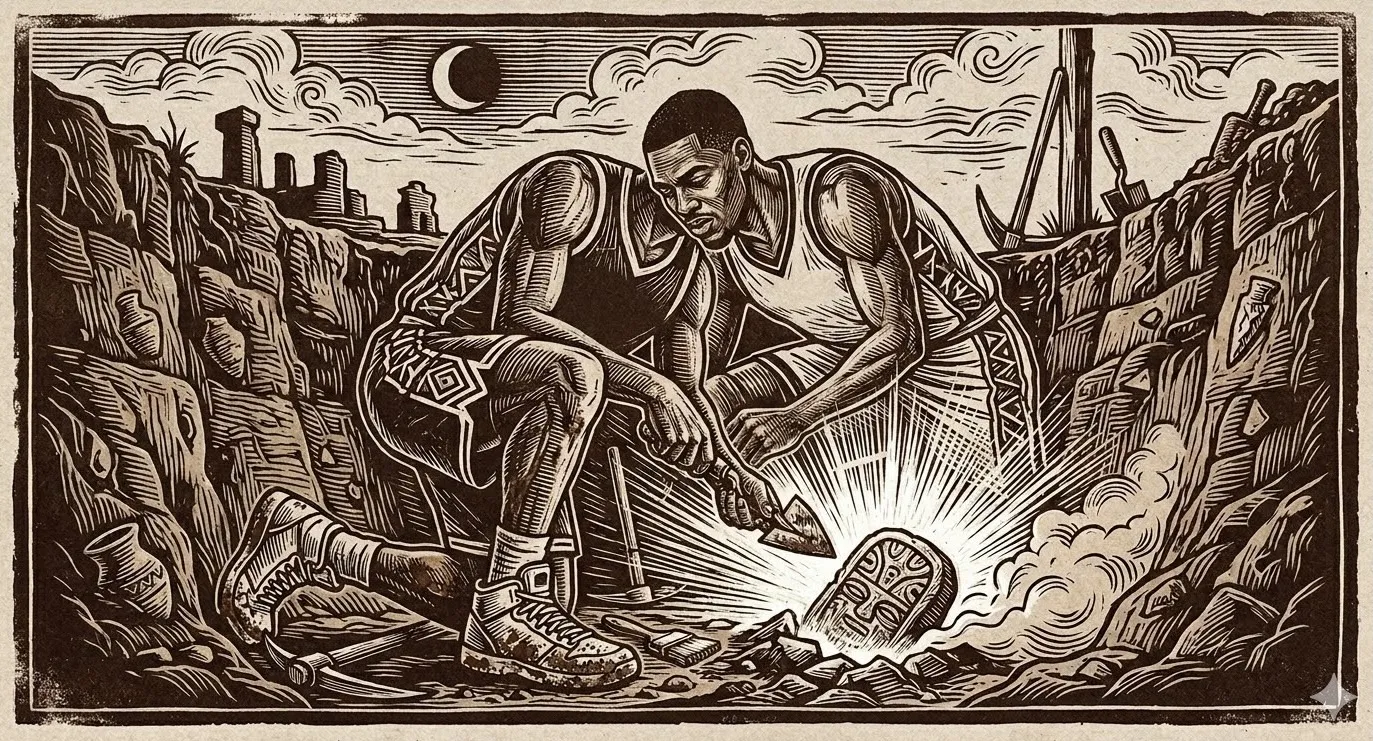

The experiment made me laugh and slightly question my sanity. I asked Claude to read a random Wikipedia page and then look again at the shoemaker project through that lens. The first page was about archaeology (footnote 1). Claude suggested that the night elves’ activities could be seen as layers, with the transitions between layers being particularly interesting for human reviewers in the morning. I’m not sure whether it’s onto something or completely insane.

The second was about the NCAA (footnote 2). Claude latched onto the idea of consensus and suggested that autonomous agents could use it to agree on whether the application’s invariants are actually implemented.

I’m still thinking this idea through. It would create a lot of junk, as is the case with humans engaged in creative daydreaming. At the same time, it might produce seeds of novel improvements that surprise and delight us. The questions I’m thinking about at the moment are: how can we seed ideas, how can we challenge them, and how do we let the best ones improve and become part of our systems?

Here are Claude’s ideas about the codebase, inspired by random Wikipedia articles:

Footnotes

1. Archaeological Sections: In archaeology, a profile (also called a section) is a view of part of the archaeological sequence, showing it in the vertical plane as a cross-section and thereby illustrating its profile and stratigraphy. This can make the sequence easier to view and to interpret as it developed over time.

The concept: in archaeology, a section is a vertical cross-section through layers of earth. You cut into the ground and look at the side wall. Each layer represents a different time period — the deeper you go, the older. By reading the layers, you understand the sequence of events: what was built, what was destroyed, what was built on top.

How this connects to shoe-makers:

The shift log is already a stratigraphic record — each entry is a layer showing what happened. But we only read it forwards (chronologically). The archaeological insight is that the most interesting information is in the boundaries between layers — where one period ends and another begins. Where an elf’s approach changed. Where a finding caused a pivot. Where the spec diverged from the code.

More specifically: the branch is a section through the project’s history. When the human reviews in the morning, they’re doing archaeology — cutting through layers of elf work and trying to understand the sequence. Right now they get a flat shift log. What if they got an actual profile — a structured view showing:

- Layer 1: explore (found 5 candidates)

- Layer 2: prioritise (picked invariant X, here’s why)

- Layer 3: execute (implemented X, 3 files changed)

- Layer 4: review (approved with one note)

- Boundary: elf session changed here

- Layer 5: explore (found the previous approach was wrong)

- Disruption layer: spec changed, elf pivoted

The disruption layers are the most interesting — they show where assumptions broke. The current shift log buries these in chronological noise. A “section view” would highlight them.
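A section view could be generated from the existing shift log with very little machinery. This sketch assumes each log entry carries a session id, a step name, a note, and a flag meaning "a spec change caused this entry"; those fields are hypothetical, since the real shift-log format isn't described here. It numbers ordinary layers, inserts a boundary marker whenever the session changes, and pulls out the boundaries and disruptions for the reviewer to read first.

```python
from dataclasses import dataclass


@dataclass
class ShiftEntry:
    session: str                # hypothetical: which elf session wrote the entry
    step: str                   # explore / prioritise / execute / review
    note: str
    spec_changed: bool = False  # hypothetical flag: entry records a spec-driven pivot


def section_view(entries: list[ShiftEntry]) -> list[str]:
    """Render a shift log as a stratigraphic profile: numbered layers,
    with session boundaries and disruption layers called out explicitly."""
    lines: list[str] = []
    previous_session = None
    layer = 0
    for entry in entries:
        if previous_session is not None and entry.session != previous_session:
            lines.append("Boundary: elf session changed here")
        previous_session = entry.session
        if entry.spec_changed:
            # Disruption layers are not numbered; they mark where assumptions broke.
            lines.append(f"Disruption layer: {entry.note}")
        else:
            layer += 1
            lines.append(f"Layer {layer}: {entry.step} ({entry.note})")
    return lines


def highlights(view: list[str]) -> list[str]:
    """The lines a morning reviewer should read first."""
    return [line for line in view if line.startswith(("Boundary", "Disruption"))]
```

Fed the example shift above (four entries from one session, then two from a second session, the last flagged as a spec change), `section_view` reproduces the Layer 1 to Layer 5 profile with the boundary and disruption lines in place, and `highlights` returns just those two lines.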

Also: archaeologists don’t just dig randomly. They dig where they expect to find something interesting — at boundaries, transitions, anomalies. The explore step could do the same: instead of reading everything equally, look for boundaries in the codebase — where style changes, where conventions break, where one developer’s code meets another’s. That’s where the bugs and design debt hide.
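The "dig at boundaries" idea could start very small. This sketch assumes a Python codebase and uses one crude, hypothetical proxy for "where conventions break": it flags the points in a file where function names flip between snake_case and camelCase. The heuristic and function names are illustrative only; it relies on the standard `ast` and `re` modules.

```python
import ast
import re

# snake_case needs at least one underscore; a single lowercase word is
# ambiguous between styles, so it is classified as "other" and ignored.
SNAKE = re.compile(r"^_*[a-z0-9]+(_[a-z0-9]+)+$")
CAMEL = re.compile(r"^_*[a-z]+([A-Z][a-z0-9]*)+$")


def naming_style(name: str) -> str:
    if SNAKE.match(name):
        return "snake"
    if CAMEL.match(name):
        return "camel"
    return "other"


def style_boundaries(source: str) -> list[tuple[int, str, str]]:
    """Return (line, old_style, new_style) wherever the naming style of
    consecutive function definitions in the source flips."""
    tree = ast.parse(source)
    # ast.walk does not promise source order, so sort by line number.
    funcs = sorted(
        (node for node in ast.walk(tree)
         if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))),
        key=lambda node: node.lineno,
    )
    boundaries = []
    previous = None
    for node in funcs:
        style = naming_style(node.name)
        if style == "other":
            continue
        if previous is not None and style != previous:
            boundaries.append((node.lineno, previous, style))
        previous = style
    return boundaries
```

Naming style is only one signal. The same walk could compare indentation, docstring style or error-handling conventions, and a useful explore step would probably combine several before deciding where to dig.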

Not sure any of this is directly actionable right now, but the “section view of the shift log” idea is interesting: it would make the morning review much more useful.

2. 1992 NCAA Men’s Basketball All-Americans: The consensus 1992 College Basketball All-American team, as determined by aggregating the results of four major All-American teams. To earn “consensus,” a player must be selected by a majority of the independent selectors (AP, USBWA, UPI and NABC). No single selector determines the outcome; the consensus emerges from agreement across multiple viewpoints.

This directly maps to the invariant evidence problem. Right now, a single evidence pattern (string match) determines whether a claim is “implemented.” That’s like one selector choosing the All-American team. It’s easy to game — impress one voter.

What if invariant evidence required consensus? Multiple independent checks must agree:

- Code evidence: does the function/pattern exist? (current approach)

- Test evidence: does a test exercise this behaviour? (current approach)

- Behavioural evidence: does running the system produce the expected outcome? (missing)

- Review evidence: has a reviewer confirmed this works? (missing)

A claim is only “implemented-tested” when a majority agree. If the code pattern exists but no test exercises it and no reviewer has confirmed it, it’s “implemented-untested” at best — even if the string match says otherwise.

This would make cheating much harder. You can’t just add a function name and call it implemented. You’d need the function, a real test, AND either a passing integration check or a reviewer sign-off.
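The consensus rule can be written as a small classifier. This is a sketch under two assumptions: each of the four evidence types has already been reduced to a pass/fail boolean, and the rule follows the stricter reading in the paragraph above (the code and a real test, plus either a behavioural check or a reviewer sign-off, which is three of four and therefore a majority). The "unimplemented" label for claims with no code evidence is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    code: bool       # does the function/pattern exist? (the current string match)
    test: bool       # does a test exercise this behaviour?
    behaviour: bool  # did a behavioural check of the running system pass?
    review: bool     # has a reviewer confirmed it works?


def claim_status(ev: Evidence) -> str:
    """A claim is implemented-tested only when three of the four
    independent checks agree, and those must include code and a test."""
    if ev.code and ev.test and (ev.behaviour or ev.review):
        return "implemented-tested"
    if ev.code:
        # A string match alone, or code plus a test but no third voice.
        return "implemented-untested"
    return "unimplemented"  # hypothetical label for claims with no code evidence
```

A function name on its own now lands at "implemented-untested", which is exactly the gaming case: impressing one voter is no longer enough.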

The practical version: add a consensus field to the evidence YAML. Instead of just source and test, add behaviour (a command to run that checks the outcome) and reviewed (a finding or critique that confirms it). The claim’s status is determined by how many evidence types agree.
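A sketch of the practical version, assuming the evidence YAML has already been parsed into a dict and that the existing source and test string matches have been resolved to booleans. Here behaviour holds a command as an argument list and counts as agreeing when it exits with status 0; reviewed holds a reference to a confirming finding. These field shapes are assumptions, not the project's actual schema.

```python
import subprocess
import sys


def behaviour_passes(command: list[str], timeout: float = 60.0) -> bool:
    """Run a claim's behaviour check; exit status 0 means the outcome matched."""
    try:
        result = subprocess.run(command, capture_output=True, timeout=timeout)
    except (OSError, subprocess.TimeoutExpired):
        # A check that cannot start or never finishes does not agree.
        return False
    return result.returncode == 0


def agreeing_evidence(entry: dict) -> int:
    """Count how many evidence types agree for one parsed evidence entry.

    Assumed (hypothetical) shape:
    {"source": bool, "test": bool, "behaviour": [argv, ...] or None, "reviewed": str or None}
    """
    count = int(bool(entry.get("source"))) + int(bool(entry.get("test")))
    if entry.get("behaviour"):
        count += int(behaviour_passes(entry["behaviour"]))
    if entry.get("reviewed"):
        count += 1
    return count


if __name__ == "__main__":
    # Usage: a behaviour check that simply exits 0.
    passing = [sys.executable, "-c", "raise SystemExit(0)"]
    print(agreeing_evidence({"source": True, "test": True, "behaviour": passing, "reviewed": None}))
```

Treating a command that cannot be started, or that times out, as disagreement keeps a broken check from silently counting in the claim's favour.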

This one feels more directly actionable than the archaeology one. The consensus model for invariant verification would genuinely solve the gaming problem.